Manuscript accepted on: 13-Sep-2018

Published online on: 15-09-2018

Plagiarism Check: Yes

Reviewed by: Sivakumar Ramamurthy

Second Review by:

Final Approval by: Alessandro Leite Cavalcanti

Ayush Dogra, Bhawna Goyal and Sunil Agrawal

UIET, Panjab University Chandigarh, India.

Corresponding Author E-mail: ayush123456789@gmail.com

DOI : https://dx.doi.org/10.13005/bpj/1482

Abstract

Digital images are a powerful and widely used medium of communication. They represent intricate details of the world around us in a compact and readily available manner. Owing to advances in acquisition devices such as bio-sensors and remote sensors, a huge amount of data is available for further processing and information extraction. The need to process this volume of information efficiently has given rise to disciplines such as image processing, image analysis, computer vision and image fusion. This article gives a brief insight into the basic concepts and significance of image fusion.

Keywords

Digital Subtraction Angiography; Image Fusion; Medical Modalities; Multi-Sensor; Pixel-Level

Cite this article: Dogra A, Goyal B, Agrawal S. Medical Image Fusion: A Brief Introduction. Biomed Pharmacol J 2018;11(3). Available from: http://biomedpharmajournal.org/?p=22557

Introduction

Image processing can be described as performing mathematical operations on image pixels to obtain an enhanced image with better visual quality or to extract useful information. Image fusion is an efficient way of combining the information from multiple sources into one image. The combined information enables the visual perception of a more comprehensive image.

The complementary information obtained from multiple sensors, multiple foci or multiple views is fused together to generate a new image that carries information which no individual image or data set can provide. The concept of image fusion finds applications in various fields [1]. For instance, in remote sensing, data acquired via different sensors are fused to obtain an image with both high spatial and high spectral resolution. Other areas of application are surveillance, biometrics, defence and medical imaging [2]. In medical imaging in particular, images obtained from different sensors are fused together to enhance the diagnostic quality of the imagery. With advances in medical science and technology, medical imaging is able to provide various modes of imagery information [3].

Different medical images have specific characteristics that require simultaneous monitoring for clinical diagnosis. Hence, multi-modality image fusion is performed to combine the attributes of various image sensors into a single image.

Image Fusion

In the process of image fusion, the discrete information carried by each pixel of an image at a specific instance of time, state, position or circumstance is mapped onto the corresponding information from each pixel of another image of the same object at a different instance or state [4]. The design and construction of each type of optical sensor limit the type of information it can acquire. Image fusion therefore builds a composite image that supplies the data which an individual optical sensor cannot provide. The information to be fused may come from a single sensor at different points in time or from multiple sensors over a common time slot.

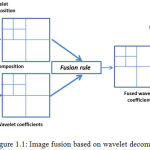

The development of image fusion tools has been sustained by various signal processing techniques and analysis methods, which include spatial filters, artificial intelligence and machine learning techniques and, most importantly, multi-scale transforms [5, 6]. After the image is decomposed into features or coefficients using a suitable transform, these are combined using an appropriate fusion rule, e.g. the pixel-level averaging rule, the weighted averaging rule or the min-max rule. With these image integration tools the image pixels are combined into a highly representational format. Fig. 1.1 shows the block diagram of a pixel level image fusion process that employs the wavelet transform and the pixel-level averaging fusion rule, to give a basic insight into an image fusion system.

Figure 1.1: Image fusion based on wavelet decomposition.

Fig. 1.1 shows the basic methodology of image fusion in the transform domain:

- Mapping of the pixel intensities of the source images into coefficients in the transform domain.

- Combining the transformed coefficients with a fusion rule, for instance the pixel-level averaging method.

- Inverse transformation of the fused coefficients to generate the final fused image.
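The three steps above can be sketched in a few lines of Python. This is only an illustrative sketch under stated assumptions, not the system in Fig. 1.1: it uses a single-level, unnormalised Haar transform written by hand, images stored as nested lists with even dimensions, and hypothetical function names such as `haar_2d` and `fuse_average`.

```python
def haar_1d(values):
    """One level of an unnormalised Haar transform on an even-length list."""
    half = len(values) // 2
    approx = [(values[2 * i] + values[2 * i + 1]) / 2 for i in range(half)]
    detail = [(values[2 * i] - values[2 * i + 1]) / 2 for i in range(half)]
    return approx + detail


def inverse_haar_1d(coeffs):
    """Invert haar_1d: rebuild each pair from its average and half-difference."""
    half = len(coeffs) // 2
    values = []
    for a, d in zip(coeffs[:half], coeffs[half:]):
        values.extend([a + d, a - d])
    return values


def transpose(matrix):
    return [list(column) for column in zip(*matrix)]


def haar_2d(image):
    """Step 1: map pixel intensities to transform coefficients (rows, then columns)."""
    rows_done = [haar_1d(row) for row in image]
    cols_done = [haar_1d(col) for col in transpose(rows_done)]
    return transpose(cols_done)


def inverse_haar_2d(coeffs):
    """Step 3: invert haar_2d (columns first, then rows)."""
    cols_done = [inverse_haar_1d(col) for col in transpose(coeffs)]
    return [inverse_haar_1d(row) for row in transpose(cols_done)]


def fuse_average(image_a, image_b):
    """Step 2 in the middle: average the coefficients of two co-registered images."""
    coeffs_a = haar_2d(image_a)
    coeffs_b = haar_2d(image_b)
    fused = [[(x + y) / 2 for x, y in zip(row_a, row_b)]
             for row_a, row_b in zip(coeffs_a, coeffs_b)]
    return inverse_haar_2d(fused)
```

Because the transform is linear, averaging every coefficient gives the same result as averaging the pixels directly. The transform-domain route becomes distinct only when the rule treats coefficients differently, for example by keeping the larger-magnitude detail coefficient.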

Types of Image Fusion

Image fusion can be categorised according to the type of source data to be fused, the type of image sensors employed or the purpose of the fusion.

Multi-view Fusion: It is the fusion of images of a single modality taken at the same time but under different conditions and from different angles. It is aimed at providing information from supplementary views. For instance, views of a scene taken from the foreground and from the background can be fused to obtain a single multi-view image [7].

Multi-modal Fusion: In this case, images captured via different sensors are fused together to integrate the information present in complementary images [8].

Multi-temporal Fusion: It is the type of fusion in which images of the same view or modality are taken at different times and fused together with the aim of detecting significant changes over time [9].

Multi-focus Fusion: In this type of image fusion, images of the same scene taken at the same time with different areas or objects in focus are fused to get all the information in a single image. For instance, an image of an object with one part in focus is fused with an image in which the other parts of the object are in focus [10].
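As one possible illustration of the multi-focus case, the sketch below picks each output pixel from whichever source image is locally sharper. The focus measure (sum of absolute differences to the four neighbours) and the names `focus_measure` and `fuse_multi_focus` are assumptions made for this example; the article does not prescribe a particular measure.

```python
def focus_measure(image, r, c):
    """Sum of absolute differences between a pixel and its 4 neighbours.

    Neighbours outside the image are clamped to the nearest edge pixel.
    """
    rows, cols = len(image), len(image[0])
    total = 0
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        rr = min(max(r + dr, 0), rows - 1)
        cc = min(max(c + dc, 0), cols - 1)
        total += abs(image[r][c] - image[rr][cc])
    return total


def fuse_multi_focus(image_a, image_b):
    """Take each pixel from the image with the higher local focus measure."""
    fused = []
    for r in range(len(image_a)):
        row = []
        for c in range(len(image_a[0])):
            a_is_sharper = focus_measure(image_a, r, c) >= focus_measure(image_b, r, c)
            row.append(image_a[r][c] if a_is_sharper else image_b[r][c])
        fused.append(row)
    return fused


# Toy scene: image_a is sharp on the left, image_b is sharp on the right.
image_a = [[0, 100, 50, 50], [0, 100, 50, 50]]
image_b = [[50, 50, 0, 100], [50, 50, 0, 100]]
all_in_focus = fuse_multi_focus(image_a, image_b)
```

Ties go to the first image here; that is an arbitrary choice for the sketch.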

Classification of Image Fusion

In the literature, image fusion has been carried out at three levels: pixel level, feature level and decision level.

Pixel level fusion: In pixel level image fusion, each pixel in the fused image acquires a value based on the pixel values of each of the source images. It is the most basic type of image fusion, performed at the signal level.

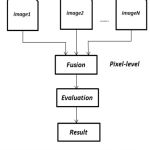

Numerous methods for pixel level fusion are reported in the literature. This type of image fusion can be carried out in the spatial domain as well as in a transform domain. In the spatial domain it is performed by linearly or non-linearly merging the pixel intensity values of the respective images. One of the simplest examples is averaging the pixel intensity values. The physical parameter, i.e. the pixel intensity value, in each of the source images is combined using an appropriate fusion rule to generate a new fused image with a different set of pixel intensity values [11]. Pixel level image fusion can also be achieved with various combinations of multi-scale decomposition methods. A simple illustration of pixel level image fusion can be seen in Fig. 1.2.

Figure 1.2: Schematic for pixel level image fusion.
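The spatial-domain rules mentioned above (averaging, weighted averaging and a maximum rule) can be written as interchangeable functions. This is a minimal sketch assuming co-registered images of equal size stored as nested lists; the names `fuse_pixels`, `average_rule`, `weighted_rule` and `max_rule` are hypothetical.

```python
def fuse_pixels(image_a, image_b, rule):
    """Apply a two-argument fusion rule to every pair of corresponding pixels."""
    return [[rule(x, y) for x, y in zip(row_a, row_b)]
            for row_a, row_b in zip(image_a, image_b)]


def average_rule(x, y):
    """Pixel-level averaging rule."""
    return (x + y) / 2


def weighted_rule(weight_a):
    """Build a weighted averaging rule; weight_a is the weight of the first image."""
    def rule(x, y):
        return weight_a * x + (1 - weight_a) * y
    return rule


def max_rule(x, y):
    """Keep the brighter of the two pixels."""
    return max(x, y)


image_a = [[100, 200], [50, 0]]
image_b = [[200, 100], [150, 40]]
averaged = fuse_pixels(image_a, image_b, average_rule)
weighted = fuse_pixels(image_a, image_b, weighted_rule(0.75))
brightest = fuse_pixels(image_a, image_b, max_rule)
```

Passing the rule as a function keeps the pixel loop in one place, so a new rule needs only a new two-argument function.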

Fig. 1.2 shows the step-wise methodology of pixel level image fusion for co-registered source images. The quality of the fused image is assessed using various evaluation parameters, e.g. the QAB/F factor, mutual information and entropy.
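Two of the metrics named above, entropy and mutual information, can be computed directly from grey-level histograms. The sketch below is an illustration under simple assumptions: images are nested lists of integer grey levels, each distinct grey level is its own histogram bin, and logarithms are base 2 (bits). The function names are hypothetical, and the QAB/F factor is not covered.

```python
from collections import Counter
from math import log2


def entropy(image):
    """Shannon entropy (in bits) of the grey-level distribution of an image."""
    pixels = [p for row in image for p in row]
    n = len(pixels)
    return -sum((k / n) * log2(k / n) for k in Counter(pixels).values())


def mutual_information(image_a, image_b):
    """Mutual information (in bits) between two images of the same size."""
    pairs = [(x, y) for row_a, row_b in zip(image_a, image_b)
             for x, y in zip(row_a, row_b)]
    n = len(pairs)
    joint = Counter(pairs)
    marginal_a = Counter(x for x, _ in pairs)
    marginal_b = Counter(y for _, y in pairs)
    # p(x, y) * log2( p(x, y) / (p(x) * p(y)) ), written with raw counts
    return sum((k / n) * log2(k * n / (marginal_a[x] * marginal_b[y]))
               for (x, y), k in joint.items())
```

In fusion evaluation, mutual information is commonly reported as the sum of the values between each source image and the fused image, i.e. `mutual_information(source_a, fused) + mutual_information(source_b, fused)`.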

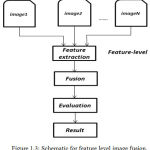

Feature level fusion: It is performed at the feature level and involves extracting detailed information or features from the images. Feature level fusion is a higher level of processing that generates data with a higher information content. In this type of fusion the information extracted from the images is integrated using more complex techniques. One of the simplest examples of feature level image fusion is region based fusion, in which the regions of interest are extracted and then fused to combine specific features of the images [12]. The process of feature level image fusion can be understood from Fig. 1.3.

Figure 1.3: Schematic for feature level image fusion.
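A very small sketch of region based fusion is given below. It assumes, purely for illustration, that the region of interest can be found by thresholding the first image; real feature extractors are far more elaborate. The names `extract_region` and `fuse_regions` are hypothetical.

```python
def extract_region(image, threshold):
    """Boolean mask of pixels at or above the threshold (a stand-in feature extractor)."""
    return [[pixel >= threshold for pixel in row] for row in image]


def fuse_regions(image_a, image_b, mask):
    """Take pixels inside the region from image_a and all other pixels from image_b."""
    return [[a if inside else b for a, b, inside in zip(row_a, row_b, row_m)]
            for row_a, row_b, row_m in zip(image_a, image_b, mask)]


image_a = [[10, 200], [220, 5]]    # bright structure of interest
image_b = [[60, 70], [80, 90]]     # background detail from the other source
region = extract_region(image_a, threshold=128)
fused = fuse_regions(image_a, image_b, region)
```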

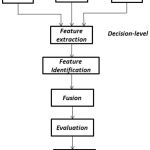

Decision level fusion: It merges information at a higher level of abstraction, combining the results from a set of algorithms to reach a final fused decision. Input images are processed one by one to extract information, as shown in Fig. 1.4, which is then fused by applying decision rules to reinforce the common interpretation. In decision level fusion, locally classified data from several source images are combined to determine the final decision. Initially, each of the source images is pre-processed to extract information. Decision rules with varying degrees of confidence are then employed to combine the extracted information and obtain a better understanding of the object under observation. Decision level image fusion finds applications in fields such as fingerprint verification, biometrics and several remote sensing applications [13].

Figure 1.4: Schematic for decision level image fusion.
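One simple decision rule is confidence-weighted voting, sketched below. It assumes each source image has already been classified separately and yields a label with a confidence score; the labels, the scores and the name `fuse_decisions` are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def fuse_decisions(decisions):
    """Combine per-source (label, confidence) pairs by summing confidence per label.

    Returns the label with the highest total confidence.
    """
    scores = defaultdict(float)
    for label, confidence in decisions:
        scores[label] += confidence
    return max(scores, key=scores.get)


# One local decision per source image.
local_decisions = [("match", 0.9), ("no match", 0.6), ("no match", 0.5)]
final_decision = fuse_decisions(local_decisions)
```

Here two moderately confident sources outweigh one highly confident source. Other rules, such as plain majority voting, fit the same interface.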

Medical Image Fusion

Image fusion is an important branch of image processing which is being extensively worked upon by researchers. However, an image is a special form of signal with its own complexity, diversity and unique behaviour, which affects fusion in the following respects:

- Image registration is a pre-requisite for image fusion. Accurately co-registered images greatly improve the quality of the fused image.

- In multi-sensor image fusion the sensor resolutions differ. Pre-processing of the images is therefore a necessary step in pixel level image fusion.

- The process of image fusion largely depends on the correlation amongst the pixels in the source images.

The fusion of images with a low degree of spatial correlation, i.e. dissimilar images as in multi-sensor image fusion, is quite challenging. Different fusion rules also yield different results on different data sources. Devising an application-specific image fusion algorithm for a specific data source is therefore a demanding task.

In the context of medical imaging, the combined analysis of various medical modalities has made image fusion a readily employed diagnostic tool. When imaging modalities are analysed side by side, medical practitioners have to switch glances between them. Image fusion facilitates the joint analysis of the modalities, shortening the time between diagnosis and patient treatment. Image integration is thus an efficient method of collecting information from various types of sensors, which helps in making an informed decision. The integration of different medical image modalities acts as an efficient diagnostic tool by providing the complementary information in a single image [14-20].

For integrated visualization of abdomen and stomach related problems, CT and ECT (Emission Computed Tomography) images are fused. CT and MRI images are combined for skull base tumor surgery. To confirm optimal arterial and vascular blood flow, MRI and ultrasound images are often fused together. Besides this, the fusion of various imaging modalities helps in the precise and accurate determination of the stages of cancer and of the response of tumors to ionizing radiation. These are the most extensively studied multi-modality imaging systems [21].

Another major field of application of image fusion is bone vessel image fusion, which is carried out with the help of Digital Subtraction Angiography (DSA). The DSA images are acquired through fluoroscopic X-ray imaging. In this process, a mask (pre-contrast) image and a contrast image (acquired after injection of the contrast material) are obtained. The DSA image is obtained by digital subtraction of the two, which suppresses the osseous information and retains the vascular information. The DSA image, dominant in vascular information, is then fused onto the mask image carrying the osseous, i.e. bone, information. Image fusion is thus emerging as an important field of research and development.

![Figure 1.5: Schematic of digital subtraction angiography (DSA) [22].](https://biomedpharmajournal.org/wp-content/uploads/2018/09/Vol11No3_Med_Ayu_fig1.5-150x150.jpg)

Figure 1.5: Schematic of digital subtraction angiography (DSA) [22].
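The DSA workflow described above can be sketched as a subtraction followed by a fusion step. The code below is a toy illustration only: it assumes perfectly registered images stored as nested lists, uses an absolute difference for the subtraction, and uses a weighted average as the fusion rule. It ignores the registration and intensity calibration a real system would need, and the names `subtract_images` and `fuse_onto_mask` are hypothetical.

```python
def subtract_images(contrast, mask):
    """Absolute difference of contrast and mask images, leaving what changed (the vessels)."""
    return [[abs(c - m) for c, m in zip(row_c, row_m)]
            for row_c, row_m in zip(contrast, mask)]


def fuse_onto_mask(dsa, mask, dsa_weight=0.5):
    """Weighted average of the vascular (DSA) image and the osseous (mask) image."""
    return [[dsa_weight * d + (1 - dsa_weight) * m for d, m in zip(row_d, row_m)]
            for row_d, row_m in zip(dsa, mask)]


mask = [[100, 100], [40, 40]]        # pre-contrast image: bone only
contrast = [[100, 60], [40, 10]]     # post-injection image: bone plus vessels
dsa = subtract_images(contrast, mask)
bone_vessel = fuse_onto_mask(dsa, mask)
```

The weight controls how strongly the vascular detail shows against the bone background; 0.5 corresponds to the plain averaging rule.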

DSA finds a wide range of application in determining the presence and extent of vascular abnormalities, aneurysms, occlusions, ulcerated plaques and stenoses. It helps a great deal in planning various surgical procedures and has thus served as an important paradigm for speedy patient care and treatment. Hence this is a promising field for research aimed at a higher level of precision and accuracy.

Conclusion

Highly reputed medical institutes in India, namely AIIMS Delhi and PGIMER Chandigarh, along with various other private and government medical colleges, use highly expensive medical image fusion tools which are imported from outside India. These tools are therefore unaffordable for small scale clinics and hospitals, specifically in rural areas. There is thus an immense need for a low cost indigenous tool which can serve in place of this highly expensive equipment. Moreover, the design of such computer aided tools could make this technology accessible in the remote areas of the nation at affordable prices. The fusion of osseous and vascular imagery data is difficult because the images are dissimilar. Most of the algorithms available in the literature are designed for nearly similar images, whereas the DSA and mask images possess a high degree of dissimilarity. Designing an efficient fusion rule for this type of source data is therefore a strong motivation. At the same time, such image fusion rules should also give comparable results for the fusion of similar source images. This manuscript aimed at equipping readers with a general understanding of image fusion and its relevance in the present day scenario.

References

- Blum S and Liu Z., Eds., Multi-Sensor Image Fusion and Its Applications. Boca Raton, FL, USA: CRC Press. 2005.

- Cle P and John L., Genderen V. Review article multisensor image fusion in remote sensing: concepts, methods and applications. International Journal of Remote Sensing. 1998;19(5):823-854.

- Wang Z and Ma Y. Medical image fusion using m-PCNN. Information Fusion. 2008;9(2):176-185.

- Jiang D., Zhuang D., Huang Y and Jingying F. Advances in multi-sensor data fusion: Algorithms and applications. Sensors. 2009;9(10):7771-7784.

- Li H., Manjunath B.S and Mitra S.K. Multisensor image fusion using the wavelet transform. Graphical Models and Image Processing. 1995;57(3):235-245.

- Sahu D. K and Parsai M. P. Different image fusion techniques–a critical review. International Journal of Modern Engineering Research (IJMER). 2012;2(5):4298-4301.

- Shah S. N. M and Desai U. B. Multi focus and multi spectral image fusion based on pixel significance using multi resolution decomposition. Signal, Image and Video Processing. 2013;7(1):95-109.

- Pohl J. L., Genderen V. Review article multisensor image fusion in remote sensing: concepts, methods and applications. International Journal of Remote Sensing. 1998;19(5):823-854.

- Dai and Khorram S. Data fusion using artificial neural networks: a case study on multitemporal change analysis. Computers Environment and Urban Systems. 1999;23(1):19-31.

- Shutao L., James T. K and Wang Y. Multi focus image fusion using artificial neural networks. Pattern Recognition Letters. 2002;23(8):985-997.

- Hamza B., He Y., Krim H and Willsky A. A multi scale approach to pixel-level image fusion. Integrated Computer-Aided Engineering. 2005;12(2):135-146.

- Whyte D and Henderson T. C. Multi-sensor data fusion. Book Chapter in Springer Handbook of Robotics. 2008;585-610.

- Salil P and Jain A. K. Decision-level fusion in fingerprint verification. Pattern Recognition. 2002;35(4):861-874.

- Ayush D., Agrawal S and Goyal B. Efficient representation of texture details in medical images by fusion of Ripplet and DDCT transformed images. Tropical Journal of Pharmaceutical Research. 2016;15(9):1983-1993.

- Ayush D., Agrawal S., Goyal B., Khandelwal N and Kamal C. A. Color and grey scale fusion of osseous and vascular information. Journal of Computational Science. 2016;17:103-114.

- Ayush D., Goyal B., Agrawal S and Kamal C. A. Efficient fusion of osseous and vascular details in wavelet domain. Pattern Recognition Letters. 2017;94:189-193.

- Ayush D., Goyal B and Agrawal S. From multi-scale decomposition to non-multi-scale decomposition methods a comprehensive survey of image fusion techniques and its applications. IEEE Access. 2017;5:16040-16067.

- Bhawna G., Dogra A., Agrawal S and Sohi B. S. Dual way residue noise thresholding along with feature preservation. Pattern Recognition Letters. 2017;94:194-201.

- Bhawna A. G, Dogra A and Agrawal S. An Efficient Image Denoising Scheme for Higher Noise Levels using Spatial Domain Filters. Biomedical and Pharmacology Journal. 2018;11(2):625-634.

- Jyotica Y, Dogra A., Goyal B and Agrawal S. A review on image fusion methodologies and applications. Research Journal of Pharmacy and Technology. 2017;10(4):1239.

- El-Gamal F. E. Z. A., Elmogy M and Atwan A. Current trends in medical image registration and fusion. Egyptian Informatics Journal. 2016;17(1):99-124.

- Patrick A. T., William J. Z, Charles M. S., Andrew B. C., Gastone G. C and Joseph F. S. Limitations of intravenous digital subtraction angiography. American Journal of Neuroradiology. 1983;4(3):271-273.